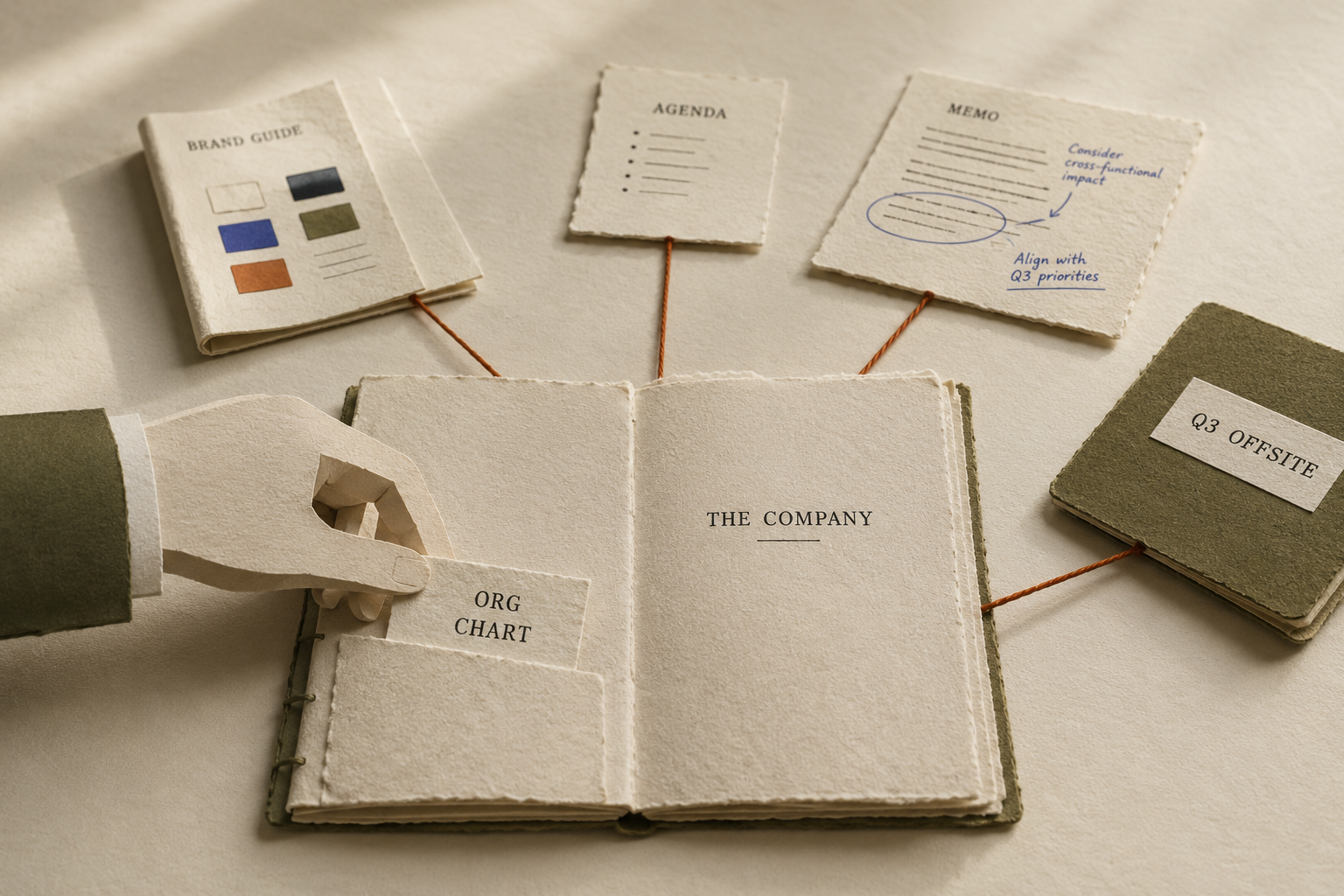

It is Tuesday morning at a 250-person company. The CEO opens Claude for the third time today. Before she can ask the question she opened it for, she has to remind it who Marcus is, what the new pricing tier replaces, and why the Atlanta office is on hold. Two floors down, the head of marketing re-uploads the brand guide because last week she was working in ChatGPT and this week she is in Copilot. The chief of staff answers the same three questions, from the same three people, that he answered yesterday. None of this is a failure of intelligence. It is intelligence being asked to start over.

Every restart is the company paying for the same lesson twice.

Forrester reported in late 2025 that fifty-five percent of enterprises regret an AI deployment they made the year before. Gartner projects that more than forty percent of agentic AI projects will be canceled by the end of 2027. The polite read is buyer's remorse over inflated promises. The honest read is operational. The product worked in the demo because the demo had context. The product disappoints in production because the production environment has no memory of the company, and the company has no system for getting that memory into the room.

There is a name for the discipline of getting that memory into the room. People in technical circles have started to call it context engineering. The phrase is doing more work than it sounds like. Strip away the engineering connotation and the meaning is plain: context engineering is the work of making sure the AI knows what your company knows, every time, without anyone retyping it.

Context, in this sense, is not a buzzword. It is everything an experienced colleague would know before answering a question. Who reports to whom, and what the new pricing tier replaces. Which proposals are still in play, and which customer used to be enthusiastic but has gone quiet. Which decision leadership made at the September offsite, and which one they walked back in November. A new hire takes six months to learn this, but the AI is being asked to learn it before lunch, every time the user opens a new chat.

A real context engineering practice gives the company three things it does not currently have.

- One place where the company writes down what it knows. Most companies have that knowledge scattered across the CEO's head, the chief of staff's notebook, a deck from the Q3 offsite, and a Slack thread from last March. The fix is one record the company can point at and say: this is what is currently true.

- Rules for what is current and what has been replaced. The marketing playbook from 2024 is not the marketing playbook now. The org chart from January is missing two reorgs. The AI does not know which version is operative unless someone tells it. The company needs a system that knows the difference and updates itself when leadership makes the call.

- A way for every AI tool to read from the same record. The CEO's morning chat, the RevOps dashboard, the customer-success assistant, and the analyst's research tool should all be reading from the same source, not from seven separate vendor memories that each know one slice of the company and contradict each other on the rest.
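For readers who want to see the three requirements in one place, here is a minimal Python sketch of what such a record could look like. It is an illustration under stated assumptions, not Maasv's implementation or any vendor's API; the names `Fact`, `ContextRecord`, and `build_prompt` are hypothetical. Each topic keeps its history, a new decision marks the previous one as replaced, and every tool builds its prompt from the same briefing rather than from its own memory. It assumes decisions are recorded in the order leadership makes them, and the Atlanta dates in the usage lines are made-up example data.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class Fact:
    """One thing the company holds to be true (hypothetical structure)."""
    topic: str
    statement: str
    decided_on: date
    superseded_by: Optional["Fact"] = None  # set when a later decision replaces this one

    @property
    def is_current(self) -> bool:
        return self.superseded_by is None


@dataclass
class ContextRecord:
    """Hypothetical company-owned record that every AI tool reads from."""
    facts: dict[str, list[Fact]] = field(default_factory=dict)

    def record(self, topic: str, statement: str, decided_on: date) -> Fact:
        """Add a decision and mark the earlier current fact on the topic as replaced.

        Assumes decisions are recorded in the order leadership makes them.
        """
        new_fact = Fact(topic, statement, decided_on)
        history = self.facts.setdefault(topic, [])
        for old in history:
            if old.is_current:
                old.superseded_by = new_fact
        history.append(new_fact)  # old versions are kept, not deleted
        return new_fact

    def current(self, topic: str) -> Optional[str]:
        """Return the operative statement for a topic, or None if nothing is recorded."""
        for fact in self.facts.get(topic, []):
            if fact.is_current:
                return fact.statement
        return None

    def briefing(self) -> str:
        """Summarize only what is currently true, one line per topic."""
        return "\n".join(f"{topic}: {self.current(topic)}" for topic in sorted(self.facts))


def build_prompt(record: ContextRecord, question: str) -> str:
    """Hypothetical helper: every tool prepends the same briefing instead of its own memory."""
    return f"Current company context:\n{record.briefing()}\n\nQuestion: {question}"


# Usage with illustrative data: a September plan is walked back in November.
ctx = ContextRecord()
ctx.record("Atlanta office", "Opening next quarter", date(2025, 9, 15))
ctx.record("Atlanta office", "On hold", date(2025, 11, 3))
print(build_prompt(ctx, "Should we staff up in Atlanta?"))
```

Keeping replaced entries instead of deleting them means the record can still say what leadership decided in September, while the briefing that every tool reads carries only what is true now.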

One concession worth making. Some vendors are starting to add memory features inside their own products. ChatGPT remembers what it has learned from you, Notion AI remembers what is in your Notion workspace, and Microsoft Copilot remembers what is in your Microsoft tenant. Each of these is real, and none of them is the record. Each is a vendor's record of you, kept inside the tool that vendor sells, and useful only as long as you keep paying for that tool and only inside it. A vendor that keeps your company's context inside its own product has not built a context layer for your company. It has built a switching cost for itself.


The system of record for a company's context cannot live inside an AI vendor's product. It has to live where the company controls it. That is the work Maasv was built to do: one record of what the company knows, owned by the company, sitting outside any AI tool, and readable by all of them. The CEO's morning chat reads from it, the brand guide lives in it, and the chief of staff updates it after the offsite so that the next morning every tool the company pays for is current.

Anyone who runs a company has been told for two years that better prompts are the answer. Better prompts are not the answer. A better prompt to an amnesiac AI gets you a faster wrong reply. The answer is the one it has always been when an organization wants to scale beyond what one person can hold in their head: write the company's knowledge down somewhere durable, keep it current as the company changes, and let every tool the company uses read from that record instead of guessing at what is still true.

Tuesday morning at the 250-person company is going to come around again next week. The question is whether the CEO opens her AI and retypes the org chart, or opens it and asks the question she actually opened it for.